You can use Amazon Elastic File System (EFS) as a shared file system between your Amazon EC2 instances to benefit from its scalability and durability. You can simply deploy your files to an Amazon EFS file system and mount it on dozens of Amazon EC2 instances in the same VPC. Then, you only need to deploy to your EFS file system when you have an update.

Now, what about automating your deployments to Amazon EFS? AWS CodePipeline does not have a deploy action integrated with Amazon EFS file systems. However, your AWS CodeBuild containers can mount EFS file systems, so you can copy your files and folders directly to your EFS file system after building them. In this post, I will talk about creating CI/CD pipelines using AWS CodePipeline and CodeBuild to build your content and automate its deployment to Amazon EFS file systems.

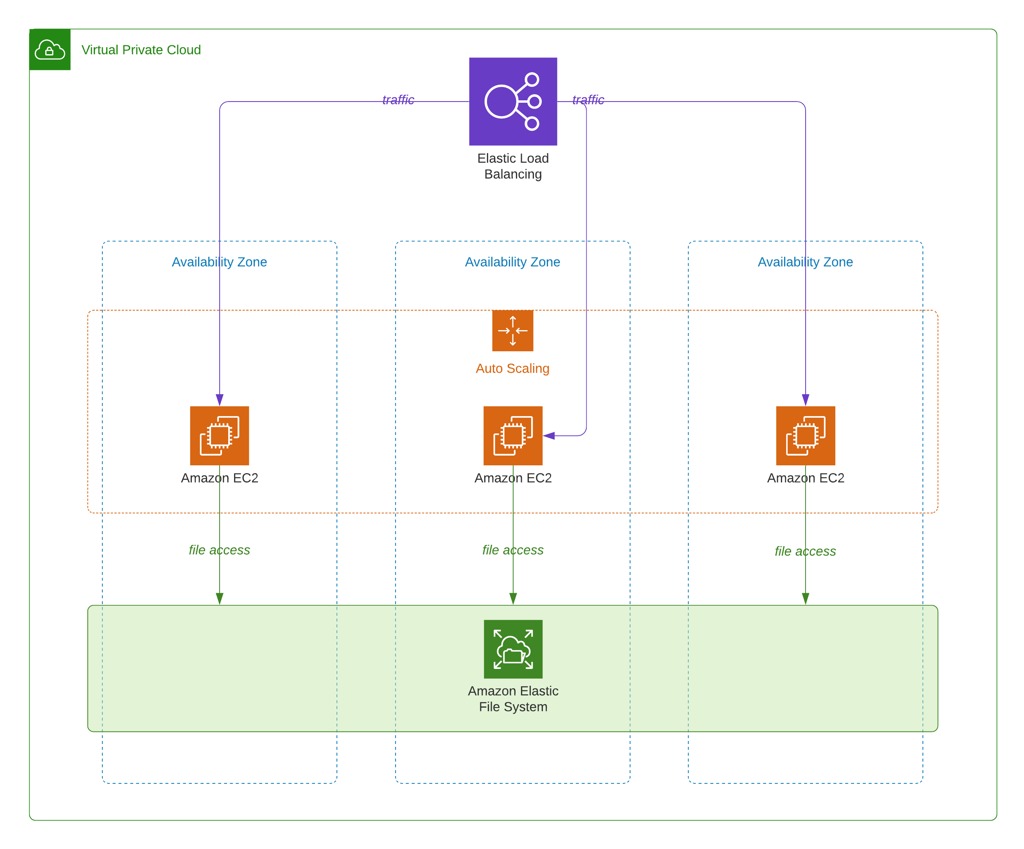

Sample AWS architecture

First of all, let’s talk about the architecture that we will deploy. An EFS file system makes the most sense when multiple EC2 instances share it. You will typically also have an Elastic Load Balancer distributing traffic to those instances, and an EC2 Auto Scaling group managing them for reliability and scalability. Our sample architecture for this post is shown below. Your EC2 instances may access an Amazon RDS instance as well, but that is beyond the scope of this post. We will only deal with application deployments and use a sample Angular application as an example.

Using AWS CodeBuild to deploy to Amazon EFS

AWS CodePipeline does not have a deploy action for Amazon EFS as it does for Amazon S3. However, Amazon EFS file systems are NFS file systems, so your AWS CodeBuild containers can mount them. To mount and deploy to an Amazon EFS file system successfully, your CodeBuild projects must be configured correctly.

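1) Your build containers should be configured to run in private subnets inside the same VPC as your Amazon EFS file system. Public subnets will not work, because the network interfaces CodeBuild creates are not assigned public IP addresses and therefore cannot reach the Internet through an internet gateway.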

2) As you will run your build containers in a VPC, you should provide Internet and EFS file system access to them with standard VPC tools. Now, let me list the basics you should take care of below.

Although CodeBuild containers run in private subnets, they need access to the Internet to download packages from S3 or 3rd party websites. So you should create a NAT gateway and route Internet requests (0.0.0.0/0 CIDR) from your private subnets via this NAT gateway.

The security group of your EFS file system must allow inbound NFS traffic (TCP port 2049) from the security group of your CodeBuild containers.

The private subnets you configured for your CodeBuild project should include all availability zones containing the mount targets of your EFS file system. Your build container will access the EFS file system using the mount targets in the same AZ.
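The three networking points above can be sketched as plain data plus one small check. This is only an illustration under assumptions: the dictionaries mirror the request shapes I would expect to pass to the EC2 API for a route and a security group rule, the function names and the sample IDs are hypothetical, and nothing here calls AWS. The AZ check reports both directions: build subnets whose AZ has no mount target, and mount target AZs that no build subnet covers.

```python
from typing import Dict, List, Set, Tuple

NFS_PORT = 2049  # EFS mount targets accept NFS traffic on TCP port 2049


def nat_default_route(nat_gateway_id: str) -> Dict[str, str]:
    """Default route for a private subnet's route table, sent via a NAT gateway."""
    return {"DestinationCidrBlock": "0.0.0.0/0", "NatGatewayId": nat_gateway_id}


def efs_ingress_rule(codebuild_sg_id: str) -> Dict[str, object]:
    """Inbound rule for the EFS security group: NFS from the CodeBuild security group."""
    return {
        "IpProtocol": "tcp",
        "FromPort": NFS_PORT,
        "ToPort": NFS_PORT,
        "UserIdGroupPairs": [{"GroupId": codebuild_sg_id}],
    }


def az_mismatch(
    build_subnet_azs: Set[str], mount_target_azs: Set[str]
) -> Tuple[List[str], List[str]]:
    """Return (build AZs without a mount target, mount target AZs without a build subnet)."""
    builds_without_target = sorted(build_subnet_azs - mount_target_azs)
    targets_without_build = sorted(mount_target_azs - build_subnet_azs)
    return builds_without_target, targets_without_build


if __name__ == "__main__":
    # Hypothetical IDs and AZ names, for illustration only.
    print(nat_default_route("nat-0123456789abcdef0"))
    print(efs_ingress_rule("sg-0123456789abcdef0"))
    print(az_mismatch({"eu-west-1a", "eu-west-1b"}, {"eu-west-1a"}))
```

If both lists returned by az_mismatch are empty, every build subnet has a mount target in its own AZ and every mount target AZ is covered by a build subnet.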

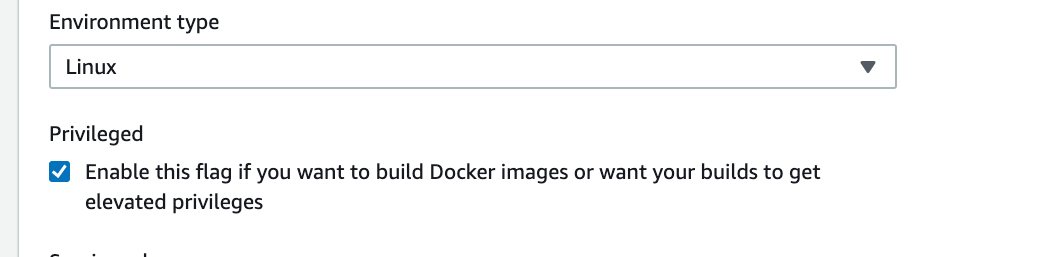

3) Your AWS CodeBuild project should run in privileged mode. Privileged mode grants a build project’s Docker container access to all devices. So it allows access to the network interfaces for inner VPC communication as well.

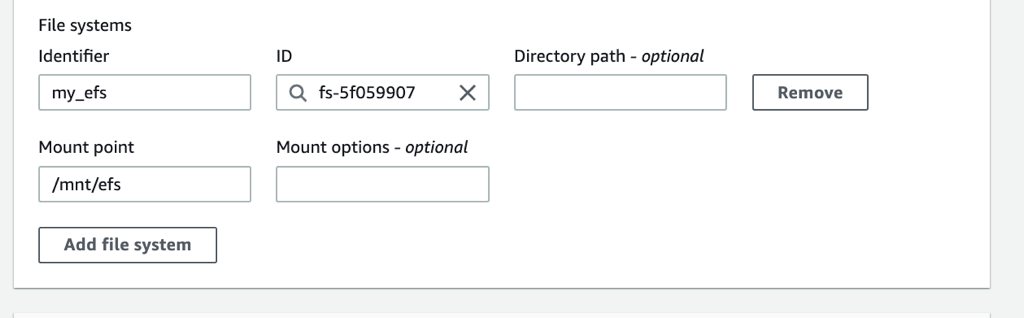

4) After these, you can add your EFS file system in the File system section of your build project by defining an identifier and a mount point for it.

Your EFS file system will be mounted at the mount point you provide. In addition, AWS CodeBuild exposes this mount point through an environment variable whose name is CODEBUILD_ followed by the file system identifier in uppercase. You can reach the files on your file system using either this environment variable or the mount point directly. In the example above, the identifier is my_efs and the mount point is /mnt/efs, so your build containers will receive a CODEBUILD_MY_EFS environment variable set to /mnt/efs.
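To make the naming rule concrete, here is a minimal sketch that derives the environment variable name from an identifier and assembles a file system entry. The helper names and the sample DNS name are hypothetical, and the dictionary keys follow the shape I would expect for a `fileSystemLocations` entry of a CodeBuild project, which is worth checking against the CodeBuild API reference before relying on it.

```python
from typing import Dict


def efs_env_var_name(identifier: str) -> str:
    """Name of the environment variable CodeBuild sets for a file system identifier."""
    return "CODEBUILD_" + identifier.upper()


def efs_location(file_system_dns: str, directory: str,
                 mount_point: str, identifier: str) -> Dict[str, str]:
    """One file system entry for a build project (assumed API shape)."""
    return {
        "type": "EFS",
        # DNS name of the file system, then the directory inside it to mount
        "location": f"{file_system_dns}:{directory}",
        "mountPoint": mount_point,
        "identifier": identifier,
    }


if __name__ == "__main__":
    # Hypothetical file system DNS name, for illustration only.
    entry = efs_location("fs-12345678.efs.eu-west-1.amazonaws.com", "/", "/mnt/efs", "my_efs")
    print(entry)
    print(efs_env_var_name(entry["identifier"]))
```

With the identifier my_efs from the example, the derived name is CODEBUILD_MY_EFS, which is the variable the buildspec commands in this post rely on.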

AWS CodePipeline pipeline choices and aligning the buildspec file accordingly

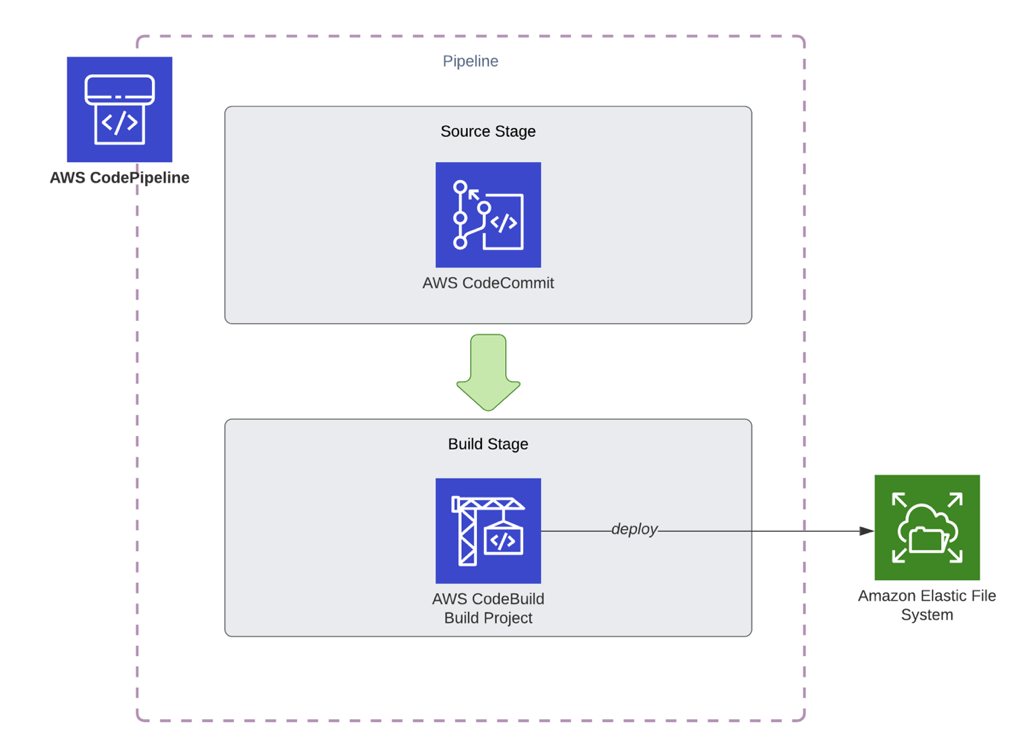

We talked about how to mount an EFS file system from a CodeBuild build container. Next, let’s talk about creating pipelines using AWS CodePipeline to build and deploy to EFS automatically in each execution.

Option 1) A simple pipeline: Use the same build project to build and deploy

As you know, AWS CodeBuild has different phases for the life cycle of a build project. So you can build the code in the build phase, and copy the files and folders generated by the build to your EFS file system in the post_build phase. Then your buildspec might be something like below.

    version: 0.2
    phases:
      install:
        runtime-versions:
          nodejs: 12
        commands:
          - npm install -g @angular/cli@9.0.6
      pre_build:
        commands:
          - npm install
      build:
        commands:
          - ng build --prod
      post_build:
        commands:
          - echo "Copying files"
          - cp -r dist/my-angular-project $CODEBUILD_MY_EFS

In this example, Angular’s build command places the artifacts inside ‘dist/my-angular-project’, and in the post_build phase, we just copy all files recursively to the mount point.
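One detail of the copy step is worth a quick sketch. Because the mount point already exists as a directory, `cp -r dist/my-angular-project $CODEBUILD_MY_EFS` places a my-angular-project folder inside it, whereas copying the folder’s contents puts the files directly at the mount point. The Python functions below only imitate both behaviours locally with the standard library (Python 3.8 or later); their names are hypothetical and they are not part of the buildspec.

```python
import shutil
from pathlib import Path


def deploy_contents(build_dir: str, mount_point: str) -> None:
    """Copy everything inside build_dir directly into the existing mount_point."""
    # dirs_exist_ok=True allows copying into a directory that already exists,
    # merging with (and overwriting) files that are already there.
    shutil.copytree(build_dir, mount_point, dirs_exist_ok=True)


def deploy_as_subdirectory(build_dir: str, mount_point: str) -> Path:
    """Imitate `cp -r build_dir mount_point` when mount_point already exists."""
    target = Path(mount_point) / Path(build_dir).name
    shutil.copytree(build_dir, target, dirs_exist_ok=True)
    return target


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        build = Path(tmp) / "dist" / "my-angular-project"
        build.mkdir(parents=True)
        (build / "index.html").write_text("<html></html>")
        mount = Path(tmp) / "efs"  # stands in for /mnt/efs
        mount.mkdir()
        deploy_contents(str(build), str(mount))
        print(deploy_as_subdirectory(str(build), str(mount)))
        print(sorted(p.name for p in mount.iterdir()))
```

Which layout you want depends on the path your web server serves from, so pick the copy form that matches it.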

Besides, in this case, our pipeline will be simple, containing only an AWS CodeCommit source action and an AWS CodeBuild build action.

However, this pipeline is not ideal because your build and deploy processes live in the same build project. Besides, you cannot define stages that deploy to a pre-production environment such as test or staging, where you could verify your changes before deploying to production. So let’s enhance this pipeline in the next option.

Option 2) A more ideal pipeline: Use different CodeBuild projects for builds and deployments

When building pipelines, I recommend separating your build and deployment projects if you use CodeBuild for both, because you can then redeploy the same build artifact to different environments such as staging and production. You can even place a test action for your automated tests or a manual approval action between these environments.

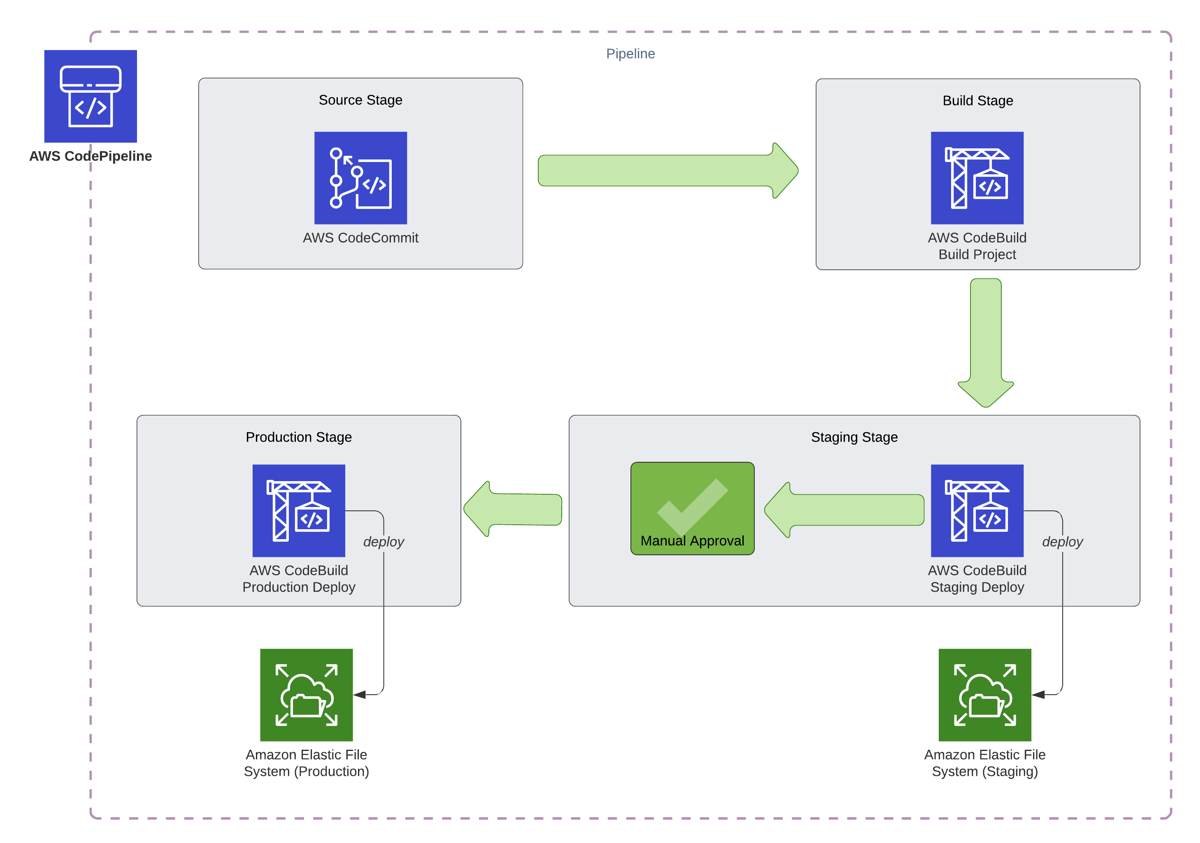

For example, let’s assume that we have a staging environment mounting a staging EFS file system inside a staging VPC. Likewise, we have a production environment with a production EFS file system in a production VPC. Then, our pipeline will be similar to the one below.

As you see, we have three different AWS CodeBuild actions in the pipeline. All CodeBuild actions will launch different build containers from three different CodeBuild projects.
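The stage layout can be sketched as data. The structure below follows the stage and action shape of a CodePipeline pipeline definition as I understand it. The project names, repository name, branch, and artifact names are all hypothetical, and the optional approval stage reflects the manual approval idea mentioned earlier rather than something this pipeline requires.

```python
from typing import Dict, List, Sequence


def codebuild_action(name: str, project: str,
                     inputs: Sequence[str], outputs: Sequence[str] = ()) -> Dict:
    """A pipeline action that runs one CodeBuild project."""
    return {
        "name": name,
        "actionTypeId": {"category": "Build", "owner": "AWS",
                         "provider": "CodeBuild", "version": "1"},
        "configuration": {"ProjectName": project},
        "inputArtifacts": [{"name": n} for n in inputs],
        "outputArtifacts": [{"name": n} for n in outputs],
    }


def pipeline_stages(with_approval: bool = False) -> List[Dict]:
    """Source, build, then one deploy action per environment, all fed by BuildOutput."""
    source = {
        "name": "Source",
        "actionTypeId": {"category": "Source", "owner": "AWS",
                         "provider": "CodeCommit", "version": "1"},
        "configuration": {"RepositoryName": "my-angular-project", "BranchName": "main"},
        "outputArtifacts": [{"name": "SourceOutput"}],
    }
    stages = [
        {"name": "Source", "actions": [source]},
        {"name": "Build", "actions": [
            codebuild_action("Build", "my-app-build", ["SourceOutput"], ["BuildOutput"])]},
        {"name": "Staging", "actions": [
            codebuild_action("DeployToStaging", "my-app-deploy-staging", ["BuildOutput"])]},
    ]
    if with_approval:
        # Optional gate between staging and production.
        approval = {
            "name": "ApproveProduction",
            "actionTypeId": {"category": "Approval", "owner": "AWS",
                             "provider": "Manual", "version": "1"},
        }
        stages.append({"name": "Approval", "actions": [approval]})
    stages.append({"name": "Production", "actions": [
        codebuild_action("DeployToProduction", "my-app-deploy-production", ["BuildOutput"])]})
    return stages


if __name__ == "__main__":
    for stage in pipeline_stages(with_approval=True):
        print(stage["name"], [a["name"] for a in stage["actions"]])
```

The point of the sketch is that both deploy actions consume the same BuildOutput artifact, so staging and production receive identical files.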

1) AWS CodeBuild project for builds

This will be a regular CodeBuild project that builds our code, just as when we deploy to S3 or EC2. In the end, the build container will create a build artifact containing the deployable code. There is no need to mount the EFS file system in this project, so it needs no VPC or other special configuration. Hence, its buildspec file, buildspec.yml, would be similar to the one below.

    version: 0.2
    phases:
      install:
        runtime-versions:
          nodejs: 12
        commands:
          - npm install -g @angular/cli@9.0.6
      pre_build:
        commands:
          - npm install
      build:
        commands:
          - ng build --prod
    artifacts:
      base-directory: dist/my-angular-project
      files:
        - '**/*'

2) AWS CodeBuild project for staging deployments

We need a separate buildspec file for our deployments because it will not contain the build commands. However, we can use the same buildspec file for both staging and production deployments and configure the environment-specific settings on the corresponding build projects. For example, the staging deployment project should only mount the staging EFS file system from the staging VPC.

So our deployment buildspec, let’s call it deploy_buildspec.yml, can be like below.

    version: 0.2
    phases:
      build:
        commands:
          - echo "Copying files"
          - cp -r . $CODEBUILD_MY_EFS

Here, we configure the identifier of the file system as my_efs. The command simply copies the contents of the input artifact, which the action receives from the AWS CodeBuild project for builds defined above, recursively to the mounted EFS file system.

Besides, you should configure the staging build project as follows.

It should run in private subnets in the staging VPC. Also, its networking configurations should be made as explained at the beginning of this post.

It should mount the staging EFS file system.

3) AWS CodeBuild project for production deployments

The CodeBuild project for the production deployments will use the same buildspec file (deploy_buildspec.yml) as the staging deployment CodeBuild project, and it will take the build artifact produced by the AWS CodeBuild project for builds as input. However, you should configure it to access only the production environment.

It should run in private subnets in the production VPC. Likewise, its networking configurations should be made as explained at the beginning of this post.

It should mount the production EFS file system using the same identifier as the staging project, my_efs, because we use the CODEBUILD_MY_EFS environment variable in our deployment command. However, you can provide a different mount point, as the environment variable will be used to access it.
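As a rough illustration of why the shared identifier matters, the sketch below compares two deployment project descriptions and lists anything that would stop them from sharing deploy_buildspec.yml. The DeployProject class, its fields, and the sample IDs are hypothetical; they are not CodeBuild API objects.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DeployProject:
    name: str
    vpc_id: str
    file_system_id: str
    identifier: str       # file system identifier set on the build project
    mount_point: str      # may differ between environments
    buildspec: str = "deploy_buildspec.yml"


def shared_buildspec_problems(staging: DeployProject,
                              production: DeployProject) -> List[str]:
    """List reasons the two projects could not safely share one deployment buildspec."""
    problems = []
    if staging.identifier.upper() != production.identifier.upper():
        problems.append("identifiers differ, so the CODEBUILD_<ID> variable names differ")
    if staging.file_system_id == production.file_system_id:
        problems.append("both projects mount the same file system")
    if staging.buildspec != production.buildspec:
        problems.append("projects point at different buildspec files")
    return problems


if __name__ == "__main__":
    staging = DeployProject("deploy-staging", "vpc-staging", "fs-staging", "my_efs", "/mnt/efs")
    production = DeployProject("deploy-production", "vpc-prod", "fs-prod", "my_efs", "/mnt/site")
    print(shared_buildspec_problems(staging, production))
```

Note that the mount points differ in the example and no problem is reported, since the buildspec only refers to the environment variable.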

Conclusion

As you see, you can use AWS CodePipeline and AWS CodeBuild to automate your deployments to your Amazon EFS file systems. In this post, we talked about two different scenarios: a simple pipeline with a single environment and a more complex pipeline with different environments. In simple terms, we use AWS CodeBuild as a command-line tool to mount and copy the files to EFS file systems. Using this information, you can customize your pipelines as you like.

Thanks for reading!
