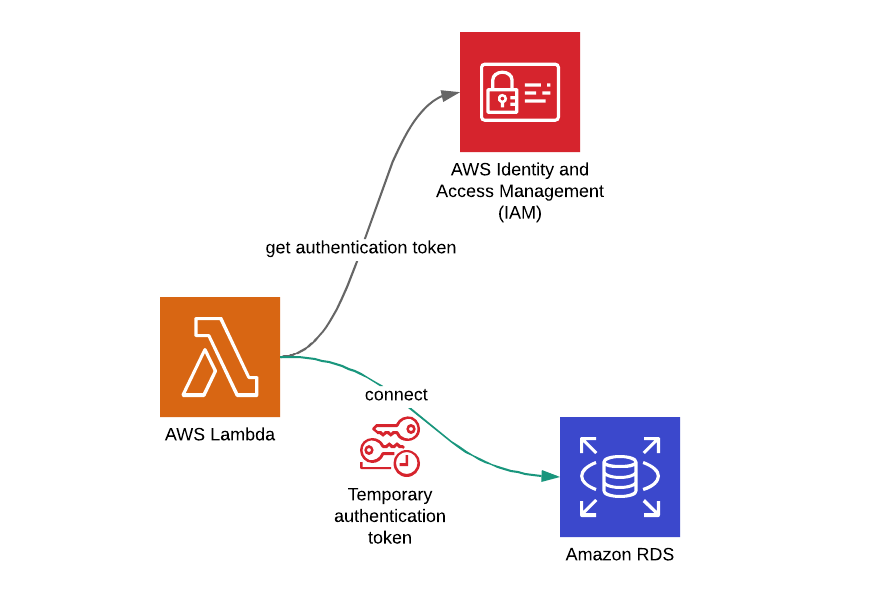

Where do you keep your DB credentials in your AWS Lambda functions that you use to access your Amazon RDS databases? Hardcoded? No! Environment variables? Well, you might be using AWS Secrets Manager, right? It would be great! But did you know that you can also use the IAM role of your AWS Lambda function and its temporary credentials to do this?

Yes, there are different ways to manage your database credentials in your AWS Lambda functions. In this post, I will talk about one of the most secure methods, using IAM authentication of Amazon RDS, with an example of an AWS Lambda function developed in Python. I will use an Amazon RDS - MySQL DB instance, but IAM authentication is also supported on PostgreSQL DB instances as well.

Why Use IAM Authentication on Amazon RDS?

The reason to enable and use IAM authentication on Amazon RDS is because it is the most secure method in theory. When you create an IAM role for your AWS Lambda function, it rotates its credentials automatically in small intervals. Considering this to keeping the credentials in an environment variable which you do not rotate often, it is no brainer that this method is more secure.

IAM is the central unit of authentication and access management on AWS. You can define a user on your database and associate it with an IAM policy and attach this policy to the IAM roles that need the same level of permissions. So you manage your DB credentials along with others on IAM, in a single platform.

But using IAM authentication on RDS is not perfect. You may have scalability problems, because the number of connections is limited to 256 connections per second when you use this method. If you need more connections, using Amazon RDS integration with AWS Secrets Manager would be more appropriate. We can discuss that method in other blog posts as well.

By the way, currently IAM authentication is supported only on MySQL and PostgreSQL databases. If you run SQL Server or Oracle on RDS, unfortunately you cannot use this feature with those database engines.

Now let’s talk about the steps to configure IAM autentication correctly on an Amazon RDS MySQL database instance.

Configuring Amazon RDS MySQL Database for IAM Authentication

Enabling IAM AUthentication on Amazon RDS

I do not know the reason behind this, but by default, IAM authentication is disabled when you create a new Amazon RDS DB instance. Hence, you should enable it via AWS Management Console or using your AWS CloudFormation template.

In your AWS CloudFormation templates

If you use AWS CloudFormation to create your DB instances, it is very simple. The only thing you need to do is to define EnableIAMDatabaseAuthentication property and set it to true as below.

...

DbInstance:

Type: AWS::RDS::DBInstance

Properties:

...

EnableIAMDatabaseAuthentication: true

...On AWS Management Console

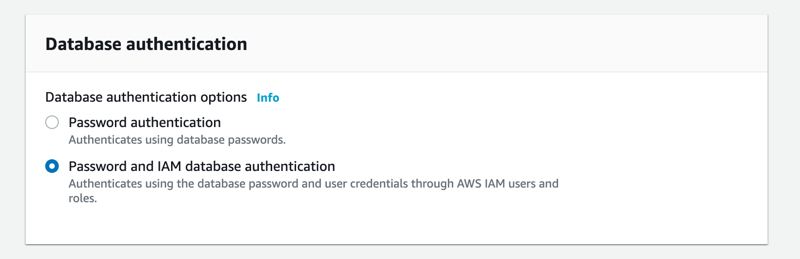

If you use AWS Management Console, it is also straightforward. You can enable IAM authentication while creating your DB instance under Database Authentication section as below.

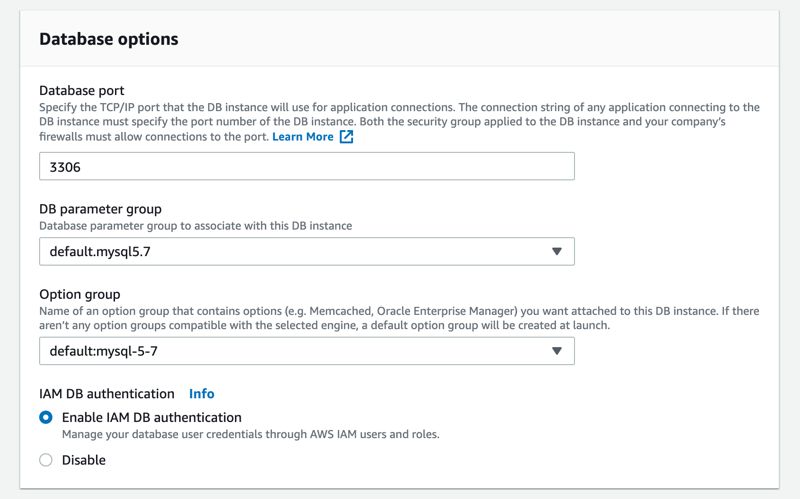

If you have an existing MySQL or PostgreSQL DB instance on Amazon RDS. you can enable IAM authentication by modifying your DB instance, too. You can select the option under Database Options -> IAM DB Authentication as below.

MySQL DB Configurations

Creating a DB User Authenticated by Amazon RDS Token Authentication

After enabling IAM authentication on your Amazon RDS DB instance, you should connect to it as the master user and create a new DB user which uses the AWSAuthenticationPlugin.

mysql> CREATE USER 'lambda-user'@'%' IDENTIFIED WITH AWSAuthenticationPlugin as 'RDS';Here we create a DB user named lambda-user on our MySQL DB instance and we do not assign a password to him/her. Instead, we configure this user to be identified by this plugin. This authentication plugin will already be installed and enabled on your Amazon RDS DB instance.

Assigning Priveleges to lambda-user

Then you should grant any privileges you like as long as it meets the minimum requirements needed by the logic of your AWS Lambda function.

mysql> GRANT SELECT ON iamtest.* TO 'lambda-user'@'%';For example, in my example, I have a database named iamtest and I only grant SELECT privileges on all tables of this database.

Creating tables and data for testing

After user setup and assigning privileges, I also create an items table and add 3 rows to use in my examples. But I will not list them here, because they are only for testing my AWS Lambda function. You can create any table or do any logic in it. Let’s focus on IAM authentication in this blog post.

Creating a SAM Template and Developing an AWS Lambda Function

We talked about AWS Serverless Application Model in a previous blog post. In this post, I will use SAM again to create my AWS Lambda function. But this time, let’s use Python 3.7 instead of Ruby as runtime.

sam init --name rds-iam-sample-app --runtime python3.7This creates my folder structure using SAM templates. Then I will edit my template.yaml and define some parameters. By the way, I started using AWS CloudFormation long before its child, AWS SAM, was born. So I love template parameters, because they allow me to re-use the templates in different scenarios.

So my template.yaml file until the Resources section becomes like this.

AWSTemplateFormatVersion: '2010-09-09'

Transform: AWS::Serverless-2016-10-31

Description: >

SAM template for RDS - IAM authentication example from AWS Lambda

Parameters:

LambdaSg:

Description: "Lambda security group ID"

Type: AWS::EC2::SecurityGroup::Id

LambdaSubnets:

Description: "Lambda subnets"

Type: List<AWS::EC2::Subnet::Id>

DBResourceId:

Type: String

Description: "RDS-DB instance resource identifier"

DBEndpoint:

Type: String

Description: "Amazon RDS MySQL endpoint"

DBName:

Type: String

Description: "Name of the database connected"

DBUsername:

Type: String

NoEcho: trueBefore proceeding, let me explain these parameters shortly:

LambdaSgandLambdaSubnetsare for configuring theVPCproperties of my AWS Lambda function. If you follow this blog, you already know from the previous posts that we need to attach a security group to our AWS Lambda functions which has access to our RDS DB instances. Otherwise, they cannot access the database. Also, we need to provide the VPC subnets that our AWS Lambda functions will be launched into.DbEndpoint,DbNameandDbUsernameare needed for the function to create the database connection. As you see, we do not have any password related parameter here. Because we will use IAM authentication.DbResourceIdis different than DB instance identifier. We will use it in the definition of the IAM policy that will grant DB access privileges to our AWS Lambda function role. We will also useDbUsernamewhile doing this.

AWS Lambda Layer for Dependencies

In this project, I would like to define an AWS Lambda layer to place my dependencies. To achieve this, we need to create this folder structure under my root directory. Because we are developing a Python function, the folder structure must be like this. This is a requirement of AWS Lambda Layers.

cd rds-iam-sample-app

mkdir -p dependencies/pythonThen I create a requirements.txt file and define only pymysql in it.

pymysqlNext I change my directory to dependencies/python and install the required packages using pip3.

pip3 install -r requirements.txt -t .This command installs the libraries in the requirements.txt into the current folder. So in our case, it installs pymysql which we will use to create connection to the DB instance from our AWS Lambda function.

Then we will define a DependenciesLayer resource in our template.yaml file.

...

Resources:

DependenciesLayer:

Type: AWS::Serverless::LayerVersion

Properties:

LayerName: !Sub "${AWS::StackName}-Dependencies"

ContentUri: dependencies/

CompatibleRuntimes:

- python3.7

...Here I use the intrinsic Fn::Sub to name the layer using the stack’s name. So if I reuse the template for another environment in the same AWS account and region, I will have conflicts while creating the stack.

Downloading the RDS SSL certificate bundle

To use IAM authentication, the request should use SSL. We will talk about this below, but firstly, we need to download the SSL certificate bundle from this link as described on Amazon RDS documentation.

This certificate will not be specific to our AWS Lambda function. So it will be wiser to include it in our DependenciesLayer. To do this, we need to download the certificate and place under dependencies/python folder. The folder structure looks like below.

--dependencies/

----python/

------pymysql/

------PyMySQL-0.9.3.dist-info/

------rds-combined-ca-bundle.pemrds-combined-ca-bundle.pem is the certificate bundle that contains both intermediate and root certificates to connect to an Amazon RDS DB instance.

AWS Lambda Function Definition in The Template

Now I will continue with the function definition. I will not change the function name and the name of its folder, but you should if it is a production-level application.

...

HelloWorldFunction:

Type: AWS::Serverless::Function

Properties:

CodeUri: hello_world/

Handler: app.lambda_handler

Runtime: python3.7

Environment:

Variables:

DB_HOST: !Ref DBEndpoint

DB_USER: !Ref DBUsername

DB_NAME: !Ref DBName

Layers:

- !Ref DependenciesLayer

Policies:

-

Version: '2012-10-17'

Statement:

Effect: Allow

Action:

- rds-db:connect

Resource:

- !Sub "arn:aws:rds-db:${AWS::Region}:${AWS::AccountId}:dbuser:${DBResourceId}/${DBUsername}"

...CodeUriis the folder containing the code. The contents of this folder will be in my AWS Lambda function in runtime.Handleris the name of the Python method that will be invoked during the function calls.- We pass

DbEndpoint,DbUsernameandDbNameparameter values asDB_HOST,DB_USERandDB_NAMEenvironment variables respectively. - In

Layersproperty, we provide my new Lambda layer. So the contents of its Python folder will be imported in our function runtime. Policiesis where we assign the necessary permissions to access the DB instance. Now let’s talk this property separately.

Policy to allow RDS DB access

In this policy, we allow rds-db:connect action. This action is different than the namespace rds which grants permissions for administrative tasks.

In addition, we use Fn::Sub function to construct the resource that is allowed to access. Please note that, although it starts with arn it is not the arn of the RDS directly. So the format of this resource is crucial to your success in connecting to your AWS Lambda function.

...

Resource:

- !Sub "arn:aws:rds-db:${AWS::Region}:${AWS::AccountId}:dbuser:${DBResourceId}/${DBUsername}"

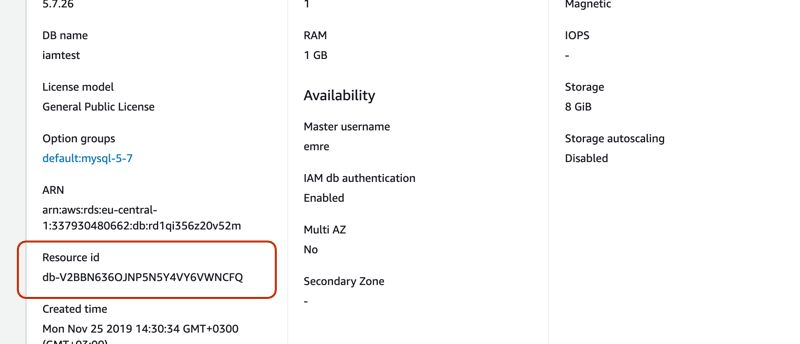

...Here DBResourceId is also important! It is not the DB instance identifier which I made the mistake to assume it as that in my first try. It is the Resource id of your RDS instance which you can find under the Configuration tab just below the arn on your DB instance details on Amazon RDS Console.

AWS Lambda function code

Imported libraries

Firstly, we will import the libraries our function will need as below.

import boto3

import logging

import pymysql

import osboto3is included when you create a Python AWS Lambda function automatically. We will use it later to get our authentication token from RDS.loggingandosare among Python’s core libraries. We will useloggingto configure our function logger.oslibrary will allow us to access the environment variables of the Lambda function.pymysqlis the library we use to create MySQL connections to our RDS instances. This time, we used an AWS Lambda layer to package it. As I mentined before, we install the dependencies underpythonfolder. Because AWS Lambda will install all content under theDependenciesfolder to the/optdirectory during the function runtime. Besides, it will support all libraries under the Python folder.

Getting Authentication Token from RDS

As we talked in our previous blog posts, it is a best practice to put the connection logic before the handler. By doing this, you allow all subsequent functions to use the same connection instead of creating their own ones.

While using IAM authentication, we do not use passwords. Instead, we use short-lived authentication tokens. Therefore, we need a way to get our tokens in runtime, right?

Exactly! This is where we use Boto3, the Python SDK of AWS. We initialize an rds client and receive our token using generate_db_auth_token method. It has three required parameters in this order:

- DB endpoint

- DB port

- DB user

# Get authentication token from RDS

rds_client = boto3.client('rds')

auth_token = rds_client.generate_db_auth_token( os.environ['DB_HOST'], 3306, os.environ['DB_USER'] )

logger.debug( "response from generate_db_auth_token")

logger.debug( auth_token )This method will return an authentication token in the form of a signed url. Then we will use this token to create the db connection.

Creating the MySQL connection

# Construct SSL

ssl = {'ca': '/opt/python/rds-combined-ca-bundle.pem'}

# Create connection

db_connection = pymysql.connect(

host=os.environ['DB_HOST'],

port=3306,

user=os.environ['DB_USER'],

passwd=auth_token,

db=os.environ['DB_NAME'],

charset='utf8',

ssl=ssl,

connect_timeout=5)

logger.debug("SUCCESS: Connection to MySQL database succeeded")As you see, firstly we construct an SSL Python dictionary. If you remember, I said that AWS Lambda installs all content in our DependenciesLayer under /opt driectory at runtime. Therefore, we can access our SSL certificate from /opt/python/rds-combined-ca-bundle.pem path.

But what about placing the certificate under another directory like certs instead of python? Actually, I tried this as well. The function times out, I think it is because it can only access the contents under /opt/python folder.

While creating the connection, we provide the authentication token we received from RDS as password as well as the ssl certificate. Then pymysql uses this certificate in runtime.

Sample function code in full

The sample code of our AWS Lambda function is as below. The rest is simple. We have a select query and we execute it using IAM authentication. Of course, you can use any logic in your functions as you like.

import boto3

import logging

import pymysql

import json

import os

# Logger settings - CloudWatch

logger = logging.getLogger()

logger.setLevel(logging.DEBUG)

# Get authentication token from RDS

rds_client = boto3.client('rds')

auth_token = rds_client.generate_db_auth_token( os.environ['DB_HOST'], 3306, os.environ['DB_USER'] )

logger.debug( "response from generate_db_auth_token")

logger.debug( auth_token )

# Conmstruct SSL

ssl = {'ca': '/opt/python/rds-combined-ca-bundle.pem'}

# Create connection

db_connection = pymysql.connect(

host=os.environ['DB_HOST'],

port=3306,

user=os.environ['DB_USER'],

passwd=auth_token,

db=os.environ['DB_NAME'],

charset='utf8',

ssl=ssl,

connect_timeout=5)

logger.debug("SUCCESS: Connection to MySQL database succeeded")

def lambda_handler(event, context):

"""

Main entry of the AWS Lambda function.

"""

logger.info("Hello world!")

return prepare_and_execute_query()

def prepare_and_execute_query():

"""

Execute the query on the MySQL database

"""

with db_connection.cursor(pymysql.cursors.DictCursor) as cur:

try:

sql_query = "SELECT * FROM items;"

items = cur.execute(sql_query)

db_connection.commit()

return items

except Exception as e:

db_connection.rollback()

raise eDeploying the function

I often use bash scripts to deploy my SAM projects. This enables me to change the parameters easily. Of course, in production-level applications, I mostly create CI/CD pipelines using CodePipeline, CodeBuild and CloudFormation and recommend you to do so.

I place the deployment script in parent directory not to check out it to code repository. So its contents are as below.

#!/bin/bash

STACK_NAME="RdsIamSampleAppStack"

LAMBDA_SG="sg-xxxxxx"

LAMBDA_SUBNETS="subnet-xxx,subnet-yyy"

SAM_BUCKET="<an-s3-bucket-for-sam>"

DB_ENDPOINT="rd1qi356z20v52m.clllclirsx1f.eu-central-1.rds.amazonaws.com"

DB_USER="lambda-user"

DB_NAME="iamtest"

REGION="eu-central-1"

DB_RESOURCE_ID="db-V2BBN636OJNP5N5Y4VY6VWNCFQ"

cd rds-iam-sample-app

# Package the project

sam package --template-file template.yaml --s3-bucket $SAM_BUCKET --output-template-file packaged-serverless.yaml --region $REGION

# Deploy the project

sam deploy --template-file ./packaged-serverless.yaml --stack-name $STACK_NAME \

--parameter-overrides LambdaSg=$LAMBDA_SG LambdaSubnets=$LAMBDA_SUBNETS \

DBResourceId=$DB_RESOURCE_ID DBEndpoint=$DB_ENDPOINT DBName=$DB_NAME DBUsername=$DB_USER \

--capabilities CAPABILITY_IAM --region $REGIONI set some parameters at the top. So it is easy to edit them. The others are standard SAM tasks: package and deploy. I execute this script when I need to re-deploy. It may be useful for you, too.

Conclusion

Using IAM authentication while connecting to a MySQL database on RDS is not common, but a relatively new and secure method. The idea of using short-lived tokens is really awesome.

But due to its limitations on the number of connections per second, I think it may be more feasible to use AWS Secrets Manager to rotate our DB credentials. We can talk about it in the future as well.

By the way, the connection logic described in this post also applies if you have applications running in an Amazon EC2 instance as well as an AWS Fargate or Amazon ECS task. You should configure your DB instance and users, get authentication token programmatically using the AWS SDK and connect the database using SSL.

Once again it was a long post. Thanks for your time to read it!

If you need help in your projects, you can contact me using the contact form on Shikisoft website. I will be happy to see what we can build together!

References

- AWS Lambda Layers - AWS Documentation

- Using SSL/TLS to Encrypt a Connection to a DB Instance - Amazon RDS Documentation

- Using SSL with a MySQL DB Instance - Amazon RDS Documentation

- How do I allow users to connect to Amazon RDS with IAM credentials? - AWS Knowledge Center

- RDS.Client.generate_db_auth_token - Boto3 Documentation

- Connection Object - PyMySQL Documentation

- Creating and Using an IAM Policy for IAM Database Access - Amazon RDS Documentation

- AWS::Serverless::Function - AWS SAM Reference